Running with nthreads = 4

DataSetInfo : [dataset] : Added class "Signal"

: Add Tree sig_tree of type Signal with 1000 events

DataSetInfo : [dataset] : Added class "Background"

: Add Tree bkg_tree of type Background with 1000 events

Factory : Booking method: BDT

:

: Rebuilding Dataset dataset

: Building event vectors for type 2 Signal

: Dataset[dataset] : create input formulas for tree sig_tree

: Using variable vars[0] from array expression vars of size 256

: Building event vectors for type 2 Background

: Dataset[dataset] : create input formulas for tree bkg_tree

: Using variable vars[0] from array expression vars of size 256

DataSetFactory : [dataset] : Number of events in input trees

:

:

: Number of training and testing events

: ---------------------------------------------------------------------------

: Signal -- training events : 800

: Signal -- testing events : 200

: Signal -- training and testing events: 1000

: Background -- training events : 800

: Background -- testing events : 200

: Background -- training and testing events: 1000

:

Factory : Booking method: TMVA_DNN_CPU

:

: Parsing option string:

: ... "!H:V:ErrorStrategy=CROSSENTROPY:VarTransform=None:WeightInitialization=XAVIER:Layout=DENSE|100|RELU,BNORM,DENSE|100|RELU,BNORM,DENSE|100|RELU,BNORM,DENSE|100|RELU,DENSE|1|LINEAR:TrainingStrategy=LearningRate=1e-3,Momentum=0.9,Repetitions=1,ConvergenceSteps=5,BatchSize=100,TestRepetitions=1,WeightDecay=1e-4,Regularization=None,Optimizer=ADAM,DropConfig=0.0+0.0+0.0+0.,MaxEpochs=10:Architecture=CPU"

: The following options are set:

: - By User:

: <none>

: - Default:

: Boost_num: "0" [Number of times the classifier will be boosted]

: Parsing option string:

: ... "!H:V:ErrorStrategy=CROSSENTROPY:VarTransform=None:WeightInitialization=XAVIER:Layout=DENSE|100|RELU,BNORM,DENSE|100|RELU,BNORM,DENSE|100|RELU,BNORM,DENSE|100|RELU,DENSE|1|LINEAR:TrainingStrategy=LearningRate=1e-3,Momentum=0.9,Repetitions=1,ConvergenceSteps=5,BatchSize=100,TestRepetitions=1,WeightDecay=1e-4,Regularization=None,Optimizer=ADAM,DropConfig=0.0+0.0+0.0+0.,MaxEpochs=10:Architecture=CPU"

: The following options are set:

: - By User:

: V: "True" [Verbose output (short form of "VerbosityLevel" below - overrides the latter one)]

: VarTransform: "None" [List of variable transformations performed before training, e.g., "D_Background,P_Signal,G,N_AllClasses" for: "Decorrelation, PCA-transformation, Gaussianisation, Normalisation, each for the given class of events ('AllClasses' denotes all events of all classes, if no class indication is given, 'All' is assumed)"]

: H: "False" [Print method-specific help message]

: Layout: "DENSE|100|RELU,BNORM,DENSE|100|RELU,BNORM,DENSE|100|RELU,BNORM,DENSE|100|RELU,DENSE|1|LINEAR" [Layout of the network.]

: ErrorStrategy: "CROSSENTROPY" [Loss function: Mean squared error (regression) or cross entropy (binary classification).]

: WeightInitialization: "XAVIER" [Weight initialization strategy]

: Architecture: "CPU" [Which architecture to perform the training on.]

: TrainingStrategy: "LearningRate=1e-3,Momentum=0.9,Repetitions=1,ConvergenceSteps=5,BatchSize=100,TestRepetitions=1,WeightDecay=1e-4,Regularization=None,Optimizer=ADAM,DropConfig=0.0+0.0+0.0+0.,MaxEpochs=10" [Defines the training strategies.]

: - Default:

: VerbosityLevel: "Default" [Verbosity level]

: CreateMVAPdfs: "False" [Create PDFs for classifier outputs (signal and background)]

: IgnoreNegWeightsInTraining: "False" [Events with negative weights are ignored in the training (but are included for testing and performance evaluation)]

: InputLayout: "0|0|0" [The Layout of the input]

: BatchLayout: "0|0|0" [The Layout of the batch]

: RandomSeed: "0" [Random seed used for weight initialization and batch shuffling]

: ValidationSize: "20%" [Part of the training data to use for validation. Specify as 0.2 or 20% to use a fifth of the data set as validation set. Specify as 100 to use exactly 100 events. (Default: 20%)]

: Will now use the CPU architecture with BLAS and IMT support !

Factory : Booking method: TMVA_CNN_CPU

:

: Parsing option string:

: ... "!H:V:ErrorStrategy=CROSSENTROPY:VarTransform=None:WeightInitialization=XAVIER:InputLayout=1|16|16:Layout=CONV|10|3|3|1|1|1|1|RELU,BNORM,CONV|10|3|3|1|1|1|1|RELU,MAXPOOL|2|2|1|1,RESHAPE|FLAT,DENSE|100|RELU,DENSE|1|LINEAR:TrainingStrategy=LearningRate=1e-3,Momentum=0.9,Repetitions=1,ConvergenceSteps=5,BatchSize=100,TestRepetitions=1,WeightDecay=1e-4,Regularization=None,Optimizer=ADAM,DropConfig=0.0+0.0+0.0+0.0,MaxEpochs=10:Architecture=CPU"

: The following options are set:

: - By User:

: <none>

: - Default:

: Boost_num: "0" [Number of times the classifier will be boosted]

: Parsing option string:

: ... "!H:V:ErrorStrategy=CROSSENTROPY:VarTransform=None:WeightInitialization=XAVIER:InputLayout=1|16|16:Layout=CONV|10|3|3|1|1|1|1|RELU,BNORM,CONV|10|3|3|1|1|1|1|RELU,MAXPOOL|2|2|1|1,RESHAPE|FLAT,DENSE|100|RELU,DENSE|1|LINEAR:TrainingStrategy=LearningRate=1e-3,Momentum=0.9,Repetitions=1,ConvergenceSteps=5,BatchSize=100,TestRepetitions=1,WeightDecay=1e-4,Regularization=None,Optimizer=ADAM,DropConfig=0.0+0.0+0.0+0.0,MaxEpochs=10:Architecture=CPU"

: The following options are set:

: - By User:

: V: "True" [Verbose output (short form of "VerbosityLevel" below - overrides the latter one)]

: VarTransform: "None" [List of variable transformations performed before training, e.g., "D_Background,P_Signal,G,N_AllClasses" for: "Decorrelation, PCA-transformation, Gaussianisation, Normalisation, each for the given class of events ('AllClasses' denotes all events of all classes, if no class indication is given, 'All' is assumed)"]

: H: "False" [Print method-specific help message]

: InputLayout: "1|16|16" [The Layout of the input]

: Layout: "CONV|10|3|3|1|1|1|1|RELU,BNORM,CONV|10|3|3|1|1|1|1|RELU,MAXPOOL|2|2|1|1,RESHAPE|FLAT,DENSE|100|RELU,DENSE|1|LINEAR" [Layout of the network.]

: ErrorStrategy: "CROSSENTROPY" [Loss function: Mean squared error (regression) or cross entropy (binary classification).]

: WeightInitialization: "XAVIER" [Weight initialization strategy]

: Architecture: "CPU" [Which architecture to perform the training on.]

: TrainingStrategy: "LearningRate=1e-3,Momentum=0.9,Repetitions=1,ConvergenceSteps=5,BatchSize=100,TestRepetitions=1,WeightDecay=1e-4,Regularization=None,Optimizer=ADAM,DropConfig=0.0+0.0+0.0+0.0,MaxEpochs=10" [Defines the training strategies.]

: - Default:

: VerbosityLevel: "Default" [Verbosity level]

: CreateMVAPdfs: "False" [Create PDFs for classifier outputs (signal and background)]

: IgnoreNegWeightsInTraining: "False" [Events with negative weights are ignored in the training (but are included for testing and performance evaluation)]

: BatchLayout: "0|0|0" [The Layout of the batch]

: RandomSeed: "0" [Random seed used for weight initialization and batch shuffling]

: ValidationSize: "20%" [Part of the training data to use for validation. Specify as 0.2 or 20% to use a fifth of the data set as validation set. Specify as 100 to use exactly 100 events. (Default: 20%)]

: Will now use the CPU architecture with BLAS and IMT support !

Factory : Train all methods

Factory : Train method: BDT for Classification

:

BDT : #events: (reweighted) sig: 800 bkg: 800

: #events: (unweighted) sig: 800 bkg: 800

: Training 400 Decision Trees ... patience please

: Elapsed time for training with 1600 events: 1.32 sec

BDT : [dataset] : Evaluation of BDT on training sample (1600 events)

: Elapsed time for evaluation of 1600 events: 0.0136 sec

: Creating xml weight file: dataset/weights/TMVA_CNN_Classification_BDT.weights.xml

: Creating standalone class: dataset/weights/TMVA_CNN_Classification_BDT.class.C

: TMVA_CNN_ClassificationOutput.root:/dataset/Method_BDT/BDT

Factory : Training finished

:

Factory : Train method: TMVA_DNN_CPU for Classification

:

: Start of deep neural network training on CPU using MT, nthreads = 4

:

: ***** Deep Learning Network *****

DEEP NEURAL NETWORK: Depth = 8 Input = ( 1, 1, 256 ) Batch size = 100 Loss function = C

Layer 0 DENSE Layer: ( Input = 256 , Width = 100 ) Output = ( 1 , 100 , 100 ) Activation Function = Relu

Layer 1 BATCH NORM Layer: Input/Output = ( 100 , 100 , 1 ) Norm dim = 100 axis = -1

Layer 2 DENSE Layer: ( Input = 100 , Width = 100 ) Output = ( 1 , 100 , 100 ) Activation Function = Relu

Layer 3 BATCH NORM Layer: Input/Output = ( 100 , 100 , 1 ) Norm dim = 100 axis = -1

Layer 4 DENSE Layer: ( Input = 100 , Width = 100 ) Output = ( 1 , 100 , 100 ) Activation Function = Relu

Layer 5 BATCH NORM Layer: Input/Output = ( 100 , 100 , 1 ) Norm dim = 100 axis = -1

Layer 6 DENSE Layer: ( Input = 100 , Width = 100 ) Output = ( 1 , 100 , 100 ) Activation Function = Relu

Layer 7 DENSE Layer: ( Input = 100 , Width = 1 ) Output = ( 1 , 100 , 1 ) Activation Function = Identity

: Using 1280 events for training and 320 for testing

: Compute initial loss on the validation data

: Training phase 1 of 1: Optimizer ADAM (beta1=0.9,beta2=0.999,eps=1e-07) Learning rate = 0.001 regularization 0 minimum error = 23.2412

: --------------------------------------------------------------

: Epoch | Train Err. Val. Err. t(s)/epoch t(s)/Loss nEvents/s Conv. Steps

: --------------------------------------------------------------

: Start epoch iteration ...

: 1 Minimum Test error found - save the configuration

: 1 | 0.93574 1.08324 0.106687 0.0105165 12477.8 0

: 2 Minimum Test error found - save the configuration

: 2 | 0.716362 0.940962 0.114932 0.0102958 11468.3 0

: 3 Minimum Test error found - save the configuration

: 3 | 0.592874 0.877019 0.109117 0.010207 12132.2 0

: 4 | 0.52324 0.903194 0.107113 0.011995 12615.9 1

: 5 Minimum Test error found - save the configuration

: 5 | 0.468663 0.86142 0.111005 0.0101821 11902 0

: 6 | 0.382985 0.930233 0.10505 0.009909 12612.8 1

: 7 Minimum Test error found - save the configuration

: 7 | 0.353522 0.834107 0.10618 0.0109391 12599.6 0

: 8 | 0.299244 0.861076 0.105327 0.00992027 12577.8 1

: 9 | 0.252929 0.880748 0.104905 0.0101775 12668 2

: 10 | 0.224682 0.877677 0.103255 0.00976718 12835.8 3

:

: Elapsed time for training with 1600 events: 1.09 sec

: Evaluate deep neural network on CPU using batches with size = 100

:

TMVA_DNN_CPU : [dataset] : Evaluation of TMVA_DNN_CPU on training sample (1600 events)

: Elapsed time for evaluation of 1600 events: 0.0516 sec

: Creating xml weight file: dataset/weights/TMVA_CNN_Classification_TMVA_DNN_CPU.weights.xml

: Creating standalone class: dataset/weights/TMVA_CNN_Classification_TMVA_DNN_CPU.class.C

Factory : Training finished

:

Factory : Train method: TMVA_CNN_CPU for Classification

:

: Start of deep neural network training on CPU using MT, nthreads = 4

:

: ***** Deep Learning Network *****

DEEP NEURAL NETWORK: Depth = 7 Input = ( 1, 16, 16 ) Batch size = 100 Loss function = C

Layer 0 CONV LAYER: ( W = 16 , H = 16 , D = 10 ) Filter ( W = 3 , H = 3 ) Output = ( 100 , 10 , 10 , 256 ) Activation Function = Relu

Layer 1 BATCH NORM Layer: Input/Output = ( 10 , 256 , 100 ) Norm dim = 10 axis = 1

Layer 2 CONV LAYER: ( W = 16 , H = 16 , D = 10 ) Filter ( W = 3 , H = 3 ) Output = ( 100 , 10 , 10 , 256 ) Activation Function = Relu

Layer 3 POOL Layer: ( W = 15 , H = 15 , D = 10 ) Filter ( W = 2 , H = 2 ) Output = ( 100 , 10 , 10 , 225 )

Layer 4 RESHAPE Layer Input = ( 10 , 15 , 15 ) Output = ( 1 , 100 , 2250 )

Layer 5 DENSE Layer: ( Input = 2250 , Width = 100 ) Output = ( 1 , 100 , 100 ) Activation Function = Relu

Layer 6 DENSE Layer: ( Input = 100 , Width = 1 ) Output = ( 1 , 100 , 1 ) Activation Function = Identity

: Using 1280 events for training and 320 for testing

: Compute initial loss on the validation data

: Training phase 1 of 1: Optimizer ADAM (beta1=0.9,beta2=0.999,eps=1e-07) Learning rate = 0.001 regularization 0 minimum error = 23.2199

: --------------------------------------------------------------

: Epoch | Train Err. Val. Err. t(s)/epoch t(s)/Loss nEvents/s Conv. Steps

: --------------------------------------------------------------

: Start epoch iteration ...

: 1 Minimum Test error found - save the configuration

: 1 | 2.15699 0.899246 0.778613 0.0673831 1687.22 0

: 2 Minimum Test error found - save the configuration

: 2 | 0.864086 0.870808 0.805348 0.0652972 1621.51 0

: 3 Minimum Test error found - save the configuration

: 3 | 0.756129 0.749082 0.811639 0.0662186 1609.83 0

: 4 Minimum Test error found - save the configuration

: 4 | 0.707042 0.698368 0.792417 0.0723533 1666.52 0

: 5 Minimum Test error found - save the configuration

: 5 | 0.678996 0.693206 0.80337 0.0657681 1626.89 0

: 6 Minimum Test error found - save the configuration

: 6 | 0.672659 0.691974 0.8102 0.0663748 1613.28 0

: 7 Minimum Test error found - save the configuration

: 7 | 0.661462 0.685135 0.800702 0.0656736 1632.59 0

: 8 Minimum Test error found - save the configuration

: 8 | 0.650825 0.684344 0.811004 0.0660992 1610.94 0

: 9 | 0.642609 0.684729 0.812592 0.0651742 1605.53 1

: 10 Minimum Test error found - save the configuration

: 10 | 0.645377 0.669584 0.81211 0.0689347 1614.69 0

:

: Elapsed time for training with 1600 events: 8.11 sec

: Evaluate deep neural network on CPU using batches with size = 100

:

TMVA_CNN_CPU : [dataset] : Evaluation of TMVA_CNN_CPU on training sample (1600 events)

: Elapsed time for evaluation of 1600 events: 0.349 sec

: Creating xml weight file: dataset/weights/TMVA_CNN_Classification_TMVA_CNN_CPU.weights.xml

: Creating standalone class: dataset/weights/TMVA_CNN_Classification_TMVA_CNN_CPU.class.C

Factory : Training finished

:

: Ranking input variables (method specific)...

BDT : Ranking result (top variable is best ranked)

: --------------------------------------

: Rank : Variable : Variable Importance

: --------------------------------------

: 1 : vars : 1.122e-02

: 2 : vars : 9.709e-03

: 3 : vars : 9.665e-03

: 4 : vars : 8.886e-03

: 5 : vars : 8.783e-03

: 6 : vars : 8.723e-03

: 7 : vars : 8.539e-03

: 8 : vars : 8.358e-03

: 9 : vars : 8.272e-03

: 10 : vars : 8.245e-03

: 11 : vars : 8.218e-03

: 12 : vars : 8.118e-03

: 13 : vars : 8.116e-03

: 14 : vars : 8.029e-03

: 15 : vars : 8.010e-03

: 16 : vars : 7.757e-03

: 17 : vars : 7.756e-03

: 18 : vars : 7.520e-03

: 19 : vars : 7.518e-03

: 20 : vars : 7.471e-03

: 21 : vars : 7.346e-03

: 22 : vars : 7.226e-03

: 23 : vars : 7.097e-03

: 24 : vars : 6.965e-03

: 25 : vars : 6.961e-03

: 26 : vars : 6.919e-03

: 27 : vars : 6.855e-03

: 28 : vars : 6.834e-03

: 29 : vars : 6.708e-03

: 30 : vars : 6.707e-03

: 31 : vars : 6.282e-03

: 32 : vars : 6.201e-03

: 33 : vars : 6.106e-03

: 34 : vars : 6.070e-03

: 35 : vars : 6.016e-03

: 36 : vars : 6.004e-03

: 37 : vars : 5.987e-03

: 38 : vars : 5.872e-03

: 39 : vars : 5.783e-03

: 40 : vars : 5.782e-03

: 41 : vars : 5.765e-03

: 42 : vars : 5.764e-03

: 43 : vars : 5.764e-03

: 44 : vars : 5.737e-03

: 45 : vars : 5.734e-03

: 46 : vars : 5.717e-03

: 47 : vars : 5.678e-03

: 48 : vars : 5.660e-03

: 49 : vars : 5.644e-03

: 50 : vars : 5.632e-03

: 51 : vars : 5.594e-03

: 52 : vars : 5.594e-03

: 53 : vars : 5.580e-03

: 54 : vars : 5.520e-03

: 55 : vars : 5.462e-03

: 56 : vars : 5.427e-03

: 57 : vars : 5.405e-03

: 58 : vars : 5.387e-03

: 59 : vars : 5.368e-03

: 60 : vars : 5.344e-03

: 61 : vars : 5.341e-03

: 62 : vars : 5.312e-03

: 63 : vars : 5.229e-03

: 64 : vars : 5.219e-03

: 65 : vars : 5.190e-03

: 66 : vars : 5.181e-03

: 67 : vars : 5.170e-03

: 68 : vars : 5.108e-03

: 69 : vars : 5.092e-03

: 70 : vars : 5.044e-03

: 71 : vars : 5.021e-03

: 72 : vars : 4.955e-03

: 73 : vars : 4.943e-03

: 74 : vars : 4.942e-03

: 75 : vars : 4.913e-03

: 76 : vars : 4.887e-03

: 77 : vars : 4.768e-03

: 78 : vars : 4.753e-03

: 79 : vars : 4.753e-03

: 80 : vars : 4.747e-03

: 81 : vars : 4.738e-03

: 82 : vars : 4.728e-03

: 83 : vars : 4.623e-03

: 84 : vars : 4.616e-03

: 85 : vars : 4.610e-03

: 86 : vars : 4.601e-03

: 87 : vars : 4.592e-03

: 88 : vars : 4.569e-03

: 89 : vars : 4.553e-03

: 90 : vars : 4.544e-03

: 91 : vars : 4.534e-03

: 92 : vars : 4.523e-03

: 93 : vars : 4.523e-03

: 94 : vars : 4.513e-03

: 95 : vars : 4.492e-03

: 96 : vars : 4.409e-03

: 97 : vars : 4.406e-03

: 98 : vars : 4.358e-03

: 99 : vars : 4.291e-03

: 100 : vars : 4.283e-03

: 101 : vars : 4.254e-03

: 102 : vars : 4.243e-03

: 103 : vars : 4.201e-03

: 104 : vars : 4.149e-03

: 105 : vars : 4.147e-03

: 106 : vars : 4.111e-03

: 107 : vars : 4.099e-03

: 108 : vars : 4.075e-03

: 109 : vars : 4.062e-03

: 110 : vars : 4.052e-03

: 111 : vars : 4.040e-03

: 112 : vars : 4.018e-03

: 113 : vars : 4.017e-03

: 114 : vars : 3.997e-03

: 115 : vars : 3.903e-03

: 116 : vars : 3.867e-03

: 117 : vars : 3.837e-03

: 118 : vars : 3.812e-03

: 119 : vars : 3.788e-03

: 120 : vars : 3.784e-03

: 121 : vars : 3.771e-03

: 122 : vars : 3.769e-03

: 123 : vars : 3.767e-03

: 124 : vars : 3.718e-03

: 125 : vars : 3.688e-03

: 126 : vars : 3.671e-03

: 127 : vars : 3.667e-03

: 128 : vars : 3.640e-03

: 129 : vars : 3.632e-03

: 130 : vars : 3.622e-03

: 131 : vars : 3.602e-03

: 132 : vars : 3.597e-03

: 133 : vars : 3.575e-03

: 134 : vars : 3.571e-03

: 135 : vars : 3.543e-03

: 136 : vars : 3.528e-03

: 137 : vars : 3.522e-03

: 138 : vars : 3.519e-03

: 139 : vars : 3.513e-03

: 140 : vars : 3.511e-03

: 141 : vars : 3.495e-03

: 142 : vars : 3.494e-03

: 143 : vars : 3.484e-03

: 144 : vars : 3.459e-03

: 145 : vars : 3.448e-03

: 146 : vars : 3.426e-03

: 147 : vars : 3.421e-03

: 148 : vars : 3.412e-03

: 149 : vars : 3.404e-03

: 150 : vars : 3.403e-03

: 151 : vars : 3.375e-03

: 152 : vars : 3.373e-03

: 153 : vars : 3.368e-03

: 154 : vars : 3.358e-03

: 155 : vars : 3.334e-03

: 156 : vars : 3.316e-03

: 157 : vars : 3.299e-03

: 158 : vars : 3.288e-03

: 159 : vars : 3.280e-03

: 160 : vars : 3.252e-03

: 161 : vars : 3.236e-03

: 162 : vars : 3.217e-03

: 163 : vars : 3.158e-03

: 164 : vars : 3.108e-03

: 165 : vars : 3.083e-03

: 166 : vars : 3.079e-03

: 167 : vars : 3.048e-03

: 168 : vars : 3.045e-03

: 169 : vars : 3.023e-03

: 170 : vars : 3.013e-03

: 171 : vars : 2.991e-03

: 172 : vars : 2.940e-03

: 173 : vars : 2.939e-03

: 174 : vars : 2.923e-03

: 175 : vars : 2.923e-03

: 176 : vars : 2.919e-03

: 177 : vars : 2.887e-03

: 178 : vars : 2.823e-03

: 179 : vars : 2.794e-03

: 180 : vars : 2.705e-03

: 181 : vars : 2.674e-03

: 182 : vars : 2.669e-03

: 183 : vars : 2.652e-03

: 184 : vars : 2.638e-03

: 185 : vars : 2.615e-03

: 186 : vars : 2.603e-03

: 187 : vars : 2.589e-03

: 188 : vars : 2.585e-03

: 189 : vars : 2.548e-03

: 190 : vars : 2.544e-03

: 191 : vars : 2.503e-03

: 192 : vars : 2.484e-03

: 193 : vars : 2.471e-03

: 194 : vars : 2.451e-03

: 195 : vars : 2.414e-03

: 196 : vars : 2.409e-03

: 197 : vars : 2.402e-03

: 198 : vars : 2.337e-03

: 199 : vars : 2.320e-03

: 200 : vars : 2.319e-03

: 201 : vars : 2.296e-03

: 202 : vars : 2.286e-03

: 203 : vars : 2.279e-03

: 204 : vars : 2.254e-03

: 205 : vars : 2.250e-03

: 206 : vars : 2.234e-03

: 207 : vars : 2.233e-03

: 208 : vars : 2.215e-03

: 209 : vars : 2.148e-03

: 210 : vars : 2.144e-03

: 211 : vars : 2.141e-03

: 212 : vars : 2.110e-03

: 213 : vars : 2.076e-03

: 214 : vars : 2.046e-03

: 215 : vars : 2.029e-03

: 216 : vars : 1.976e-03

: 217 : vars : 1.957e-03

: 218 : vars : 1.917e-03

: 219 : vars : 1.901e-03

: 220 : vars : 1.880e-03

: 221 : vars : 1.833e-03

: 222 : vars : 1.750e-03

: 223 : vars : 1.711e-03

: 224 : vars : 1.698e-03

: 225 : vars : 1.649e-03

: 226 : vars : 1.612e-03

: 227 : vars : 1.582e-03

: 228 : vars : 1.575e-03

: 229 : vars : 1.447e-03

: 230 : vars : 1.364e-03

: 231 : vars : 1.347e-03

: 232 : vars : 1.299e-03

: 233 : vars : 1.284e-03

: 234 : vars : 1.197e-03

: 235 : vars : 1.145e-03

: 236 : vars : 1.080e-03

: 237 : vars : 1.010e-03

: 238 : vars : 9.056e-04

: 239 : vars : 0.000e+00

: 240 : vars : 0.000e+00

: 241 : vars : 0.000e+00

: 242 : vars : 0.000e+00

: 243 : vars : 0.000e+00

: 244 : vars : 0.000e+00

: 245 : vars : 0.000e+00

: 246 : vars : 0.000e+00

: 247 : vars : 0.000e+00

: 248 : vars : 0.000e+00

: 249 : vars : 0.000e+00

: 250 : vars : 0.000e+00

: 251 : vars : 0.000e+00

: 252 : vars : 0.000e+00

: 253 : vars : 0.000e+00

: 254 : vars : 0.000e+00

: 255 : vars : 0.000e+00

: 256 : vars : 0.000e+00

: --------------------------------------

: No variable ranking supplied by classifier: TMVA_DNN_CPU

: No variable ranking supplied by classifier: TMVA_CNN_CPU

TH1.Print Name = TrainingHistory_TMVA_DNN_CPU_trainingError, Entries= 0, Total sum= 4.75024

TH1.Print Name = TrainingHistory_TMVA_DNN_CPU_valError, Entries= 0, Total sum= 9.04968

TH1.Print Name = TrainingHistory_TMVA_CNN_CPU_trainingError, Entries= 0, Total sum= 8.43617

TH1.Print Name = TrainingHistory_TMVA_CNN_CPU_valError, Entries= 0, Total sum= 7.32647

Factory : === Destroy and recreate all methods via weight files for testing ===

:

: Reading weight file: dataset/weights/TMVA_CNN_Classification_BDT.weights.xml

: Reading weight file: dataset/weights/TMVA_CNN_Classification_TMVA_DNN_CPU.weights.xml

: Reading weight file: dataset/weights/TMVA_CNN_Classification_TMVA_CNN_CPU.weights.xml

Factory : Test all methods

Factory : Test method: BDT for Classification performance

:

BDT : [dataset] : Evaluation of BDT on testing sample (400 events)

: Elapsed time for evaluation of 400 events: 0.00351 sec

Factory : Test method: TMVA_DNN_CPU for Classification performance

:

: Evaluate deep neural network on CPU using batches with size = 400

:

TMVA_DNN_CPU : [dataset] : Evaluation of TMVA_DNN_CPU on testing sample (400 events)

: Elapsed time for evaluation of 400 events: 0.0125 sec

Factory : Test method: TMVA_CNN_CPU for Classification performance

:

: Evaluate deep neural network on CPU using batches with size = 400

:

TMVA_CNN_CPU : [dataset] : Evaluation of TMVA_CNN_CPU on testing sample (400 events)

: Elapsed time for evaluation of 400 events: 0.0868 sec

Factory : Evaluate all methods

Factory : Evaluate classifier: BDT

:

BDT : [dataset] : Loop over test events and fill histograms with classifier response...

:

: Dataset[dataset] : variable plots are not produces ! The number of variables is 256 , it is larger than 200

Factory : Evaluate classifier: TMVA_DNN_CPU

:

TMVA_DNN_CPU : [dataset] : Loop over test events and fill histograms with classifier response...

:

: Evaluate deep neural network on CPU using batches with size = 1000

:

: Dataset[dataset] : variable plots are not produces ! The number of variables is 256 , it is larger than 200

Factory : Evaluate classifier: TMVA_CNN_CPU

:

TMVA_CNN_CPU : [dataset] : Loop over test events and fill histograms with classifier response...

:

: Evaluate deep neural network on CPU using batches with size = 1000

:

: Dataset[dataset] : variable plots are not produces ! The number of variables is 256 , it is larger than 200

:

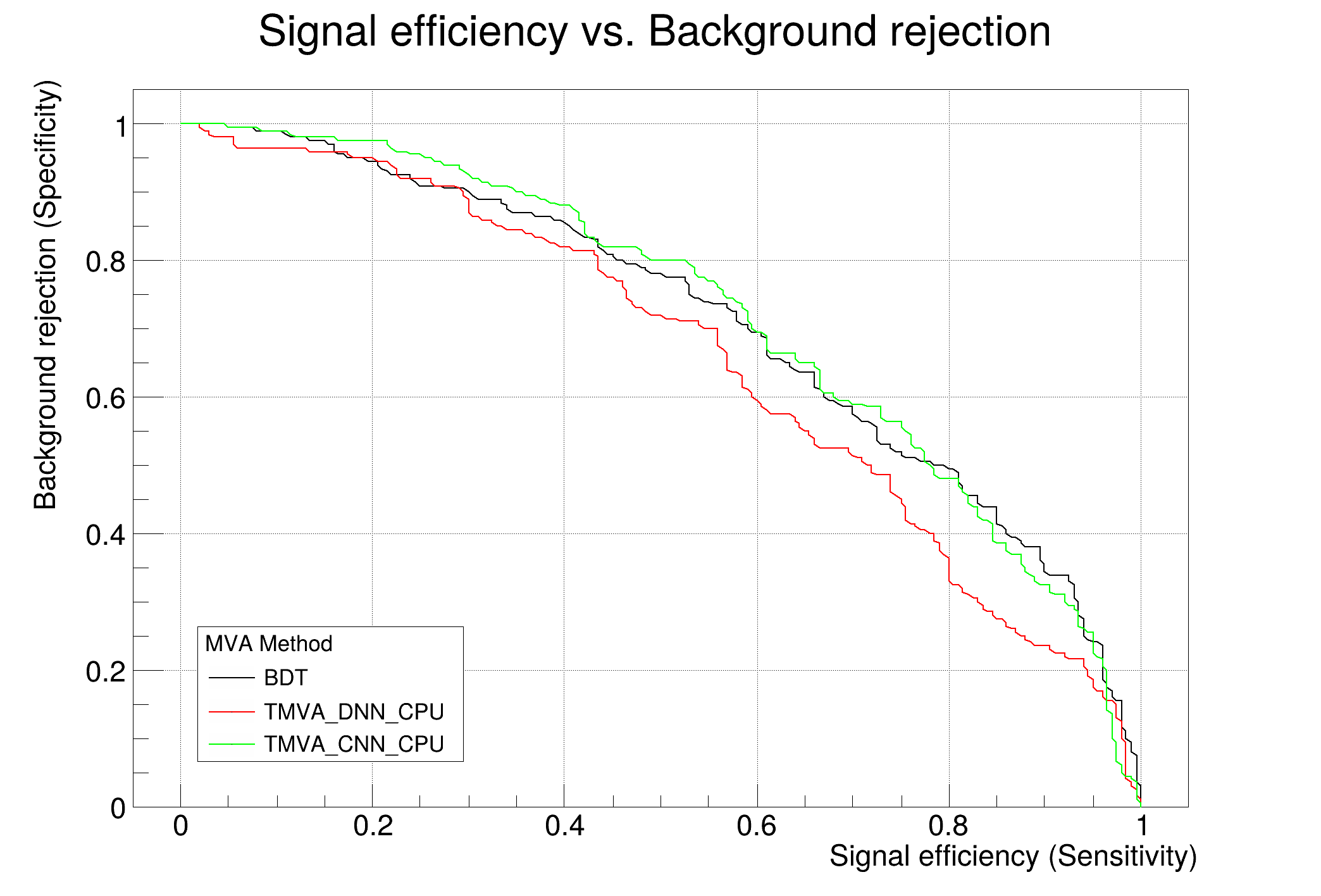

: Evaluation results ranked by best signal efficiency and purity (area)

: -------------------------------------------------------------------------------------------------------------------

: DataSet MVA

: Name: Method: ROC-integ

: dataset BDT : 0.780

: dataset TMVA_DNN_CPU : 0.688

: dataset TMVA_CNN_CPU : 0.618

: -------------------------------------------------------------------------------------------------------------------

:

: Testing efficiency compared to training efficiency (overtraining check)

: -------------------------------------------------------------------------------------------------------------------

: DataSet MVA Signal efficiency: from test sample (from training sample)

: Name: Method: @B=0.01 @B=0.10 @B=0.30

: -------------------------------------------------------------------------------------------------------------------

: dataset BDT : 0.155 (0.345) 0.438 (0.677) 0.691 (0.884)

: dataset TMVA_DNN_CPU : 0.065 (0.145) 0.280 (0.542) 0.608 (0.781)

: dataset TMVA_CNN_CPU : 0.045 (0.065) 0.186 (0.224) 0.475 (0.570)

: -------------------------------------------------------------------------------------------------------------------

:

Dataset:dataset : Created tree 'TestTree' with 400 events

:

Dataset:dataset : Created tree 'TrainTree' with 1600 events

:

Factory : Thank you for using TMVA!

: For citation information, please visit: http://tmva.sf.net/citeTMVA.html